I'm currently writing a document that I will publish soon on building a low cost lab with VI3. While I was doing some research on VMFS partition alignment, I found that I was unable to create (or delete) partitions on the /dev/sda disk that ESX is installed on. I could make the changes, but as I tried to write the changes to disk, fdisk would come back with the following error:

SCSI disk error : host 1 channel 0 id 0 lun 0 return code = be0000

I/O error: dev 08:00, sector 40355342

Device busy for revalidation (usage=8)

I know that I could just use the VI client to create this partition, but as I'm busy writing this fairly detailed document, I wanted to show potential readers how to create such an aligned local VMFS3 partition using fdisk and vmkfstools on the /dev/sda device.

Then I also had the question in my mind... "If the VI client can do it, why can't I?"

Anyway, after playing around with ESX a little, I found a fix. Use with caution though!

You need to remove the lock that ESX has on the device! The esxcfg-advcfg command can do this.

Before running fdisk, execute the following command at the service console:

esxcfg-advcfg -s 0 /Disk/PreventVMFSOverwrite

With PreventVMFSOverwrite now switched off, you can use fdisk to write the partition changes without the error. You will still get "device or resource busy" but it will write the changes.

After you have saved the partition changes using fdisk, run the following commands:

partprobe

esxcfg-advcfg -s 1 /Disk/PreventVMFSOverwrite
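
The sequence above can be sketched as a small Python 3 wrapper that switches the protection back on even if the partitioning step fails. This is only a sketch under stated assumptions: the `repartition` function, the `partition_step` hook and the injectable `run` callable are hypothetical names of my own, and the service console may only ship an older Python. Only the esxcfg-advcfg and partprobe commands come from the steps above.

```python
import subprocess

SETTING = "/Disk/PreventVMFSOverwrite"


def advcfg_cmd(value):
    """Build the esxcfg-advcfg command that sets PreventVMFSOverwrite."""
    return ["esxcfg-advcfg", "-s", str(value), SETTING]


def repartition(partition_step, run=subprocess.call):
    """Switch the protection off, partition, then switch it back on.

    partition_step: callable doing the actual fdisk work (hypothetical hook).
    run: callable that executes one command list; replace it for a dry run.
    """
    run(advcfg_cmd(0))
    try:
        partition_step()
        # Re-read the partition table only after the changes were written.
        run(["partprobe"])
    finally:
        # Re-enable the protection whether or not the step succeeded.
        run(advcfg_cmd(1))


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    def show(cmd):
        print(" ".join(cmd))

    repartition(lambda: print("(run fdisk /dev/sda here)"), run=show)
```

The point of the try/finally is simply that PreventVMFSOverwrite is never left switched off by accident.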

In the past year, I’ve been doing a lot of testing on running VMware ESX 3.5 on non-supported hardware (hardware that is not included on the VMware HCL). I’ve now got my home lab to an acceptable stage where I can do testing and studying. I’ve spent many hours on forums and blogs trying to find components that are cheap, quiet, fast-enough and most importantly, components that work with ESX 3.5!

Now that I’ve been through the trouble of finding out what works and what doesn’t, I thought it may be a good idea to blog on it and give others that would like to run ESX on cheap hardware a heads up before they go and waste money on stuff that does not work!

Although I have blogged on this topic briefly a couple of times before, I think it’s time for a proper post that describes the trials and tribulations of building a cheap ESX environment in more detail, with all the dos and don'ts!

I've started writing the article as a blog post, but I now realise that because of the amount of detail I'm putting into it, it's going to cover a lot of pages. I therefore decided to blog some of it on the site, as well as provide a full eBook in PDF format which will contain all the information you'll ever need on running ESX on low cost hardware.

I'm hoping to have the eBook ready for download by early next week.

Thanks for reading!

I've seen cases where a newly extended VMFS datastore fluctuates between the old size and the new size. Virtual Machines running on the newly extended datastore prevent the correct size of the datastore from displaying.

I found the solution to the problem in KB Article 1002558.

Products: VMware ESX and ESXi

The solution is:

- Shut down the virtual machines running on the extended datastore, or VMotion them to the ESX host that created the extent.

- On every ESX host other than the one that created the datastore, run vmkfstools -V to re-read the volume information.

- Power on or VMotion the Virtual Machines back to the original ESX hosts.
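
The middle step can be sketched as a Python 3 helper that works out which hosts need the rescan. The host names and the `ssh root@host` transport are hypothetical placeholders for illustration. Only `vmkfstools -V` comes from the steps above.

```python
def rescan_commands(all_hosts, extent_owner):
    """Return one 'vmkfstools -V' command per host that did NOT create the extent."""
    if extent_owner not in all_hosts:
        raise ValueError("extent owner %r is not in the host list" % extent_owner)
    return [
        ["ssh", "root@" + host, "vmkfstools", "-V"]  # ssh transport is an assumption
        for host in all_hosts
        if host != extent_owner
    ]


if __name__ == "__main__":
    hosts = ["esx01", "esx02", "esx03"]  # hypothetical host names
    for cmd in rescan_commands(hosts, extent_owner="esx01"):
        print(" ".join(cmd))
```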

This applies not only to VI3 hosts but to:

VMware ESX 2.0.x

VMware ESX 2.1.x

VMware ESX 2.5.x

VMware ESX 3.0.x

VMware ESX 3.5.x

VMware ESXi 3.5.x Embedded

VMware ESXi 3.5.x Installable

Now this may be old news, but I decided to blog it anyway for my own reference.

Simon Long has written a good article on how to improve VMotion performance when performing mass migrations. This is very handy when you are putting a host into maintenance mode. Thanks to Jason Boche for blogging it first!

I'll set the scene a little...

I’m working late, I’ve just installed Update Manager and I’m going to run my first updates. As with all new systems, I’m not always confident, so I decided “out of hours” would be the best time to try.

I hit “Remediate” on my first Host, then sat back, cup of tea in hand, and watched to see what happened… The Host’s VMs were slowly migrated off, 2 at a time, onto other Hosts.

“It’s gonna be a long night,” I thought to myself. So whilst I was going through my Hosts one at a time, I also fired up Google and tried to find out if there was any way I could speed up the VMotion process. There didn’t seem to be any articles or blog posts (that I could find) about improving VMotion performance, so I created a new Servicedesk job for myself to investigate this further.

3 months later, whilst at a product review at VMware UK, I was chatting to their Inside Systems Engineer, Chris Dye, and I asked him if there was a way of increasing the number of simultaneous VMotions from 2 to something more. He was unsure, so he did a little digging, managed to find a little info that might be helpful, and fired it across for me to test.

After a few hours of basic testing over the quiet Christmas period, I was able to increase the number of simultaneous VMotions… Happy Days!!

But after some further testing, it seemed as though the number of simultaneous VMotions is actually set per Host. This means that if I set my vCenter server to allow 6 VMotions and then place 2 Hosts into maintenance mode at the same time, there would actually be 12 VMotions running simultaneously. This is certainly something you should consider when deciding how many VMotions you would like running at once.

Here are the steps to increase the number of simultaneous VMotion migrations per Host.

1. RDP to your vCenter Server.

2. Locate the vpxd.cfg (Default location “C:\Documents and Settings\All Users\Application Data\VMware\VMware VirtualCenter”)

3. Make a backup of the vpxd.cfg before making any changes

4. Edit the file using WordPad and insert the following lines between the <vpxd></vpxd> tags:

<ResourceManager>

<maxCostPerHost>12</maxCostPerHost>

</ResourceManager>

5. Now you need to decide what value to give “maxCostPerHost”.

A Cold Migration has a cost of 1 and a Hot Migration (aka VMotion) has a cost of 4. I first set mine to 12 as I wanted to see if it would allow 3 VMotions at once (3×4 = 12). I now permanently have mine set to 24, which gives me 6 simultaneous VMotions per Host (6×4 = 24).

I am unsure of the maximum value that you can use here; the largest I tested was 24.

6. Save your changes and exit WordPad.

7. Restart “VMware VirtualCenter Server” Service to apply the changes.
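
For anyone who would rather script the edit than use WordPad, here is a minimal Python 3 sketch of steps 4 and 5. It assumes the file is well-formed XML containing a `<vpxd>` element. The function names and the one-line sample config are my own stand-ins, not a real vpxd.cfg. The costs of 1 and 4 are the ones quoted in step 5.

```python
import xml.etree.ElementTree as ET

COLD_MIGRATION_COST = 1  # cost quoted in step 5
VMOTION_COST = 4         # cost quoted in step 5


def max_cost_for(vmotions):
    """maxCostPerHost needed to allow this many simultaneous VMotions per Host."""
    return vmotions * VMOTION_COST


def set_max_cost_per_host(cfg_text, cost):
    """Return cfg_text with <ResourceManager><maxCostPerHost> set inside <vpxd>."""
    root = ET.fromstring(cfg_text)
    vpxd = root if root.tag == "vpxd" else root.find(".//vpxd")
    if vpxd is None:
        raise ValueError("no <vpxd> element found")
    manager = vpxd.find("ResourceManager")
    if manager is None:
        manager = ET.SubElement(vpxd, "ResourceManager")
    limit = manager.find("maxCostPerHost")
    if limit is None:
        limit = ET.SubElement(manager, "maxCostPerHost")
    limit.text = str(cost)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    sample = "<config><vpxd></vpxd></config>"  # stand-in, not a real vpxd.cfg
    print(set_max_cost_per_host(sample, max_cost_for(6)))
```

ElementTree discards comments and the original whitespace when it rewrites a file, so it is worth comparing the result against the backup from step 3 before restarting the service.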

Now that I knew how to change the number of simultaneous VMotions per Host, I decided to run some tests to see if it actually made any difference to the overall VMotion performance.

I had 2 Hosts with 16 almost identical VMs. I created a job to migrate my 16 VMs from Host 1 to Host 2.

On both Hosts, the VMotion vmnic was a single 1Gbit NIC connected to a Cisco switch which also carries other network traffic.

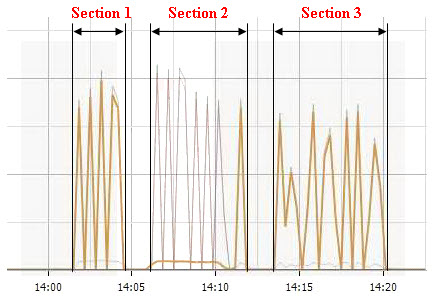

The Network Performance graph above was recorded during my testing and is displaying the “Network Data Transmit” measurement on the VMotion vmnic. The 3 sections highlighted represent the following:

Section 1 - 16 VMs VMotioned from Host 1 to Host 2 using a maximum of 6 simultaneous VMotions.

Time taken = 3:30 (min:sec)

Section 2 - This was not a test; I was simply migrating the VMs back onto the Host for the 2nd test (Section 3).

Section 3 - 16 VMs VMotioned from Host 1 to Host 2 using a maximum of 2 simultaneous VMotions.

Time taken = 6:36

Time Difference = 3:06

3 Mins!! I wasn’t expecting it to be that much. Imagine if you had a 50 Host cluster…how much time would it save you?

I tried the same test again, but only migrating 6 VMs instead of 16.

Migrating off 6 VMs with only 2 simultaneous VMotions allowed.

Time taken = 2:24

Migrating off 6 VMs with 6 simultaneous VMotions allowed.

Time taken = 1:54

Time Difference = 30 secs

It’s still an improvement, albeit not so big.
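
To put the two comparisons side by side, here is a small Python 3 sketch that reads the timings as minutes and seconds (so 3.30 means 3 min 30 s) and works out the time saved and the speed-up factor. The helper names are my own. The four timings are the ones recorded in the tests above.

```python
def to_seconds(mmss):
    """Convert a 'minutes:seconds' reading such as '3:30' to seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


def compare(slow, fast):
    """Return (seconds saved, speed-up factor) for two 'm:ss' timings."""
    slow_s, fast_s = to_seconds(slow), to_seconds(fast)
    return slow_s - fast_s, slow_s / fast_s


if __name__ == "__main__":
    tests = [("16 VMs", "6:36", "3:30"), ("6 VMs", "2:24", "1:54")]
    for label, slow, fast in tests:
        saved, factor = compare(slow, fast)
        print("%s: %d s saved, %.2fx faster" % (label, saved, factor))
```

With 16 VMs the higher limit comes out close to twice as fast, while with only 6 VMs the gain is much smaller.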

Now don’t get me wrong, these tests are hardly scientific and would never be deemed a completely fair test, but I think you get the general idea of what I was trying to get at.

I’m hoping to explore VMotion performance further by looking at maybe using multiple physical NICs for VMotion and teaming them using EtherChannel, or maybe even using 10Gbit Ethernet. Right now I don’t have the spare hardware to do that, but this is definitely something I will try when the opportunity arises.

As we are now busy doing P2V conversion of most of our physical servers I needed to use the Consolidation function in vCenter (previously known as VirtualCenter) to assess the workload of the physical servers (CPU and Memory Usage).

I was surprised to see that the "Consolidation" button had disappeared from my VI Client! I checked if it was installed as a plugin and it was. I also checked if it was enabled and again, it was. However, the button was not there!

I found that although the plugin can be installed and enabled in the VI client, it is also a requirement to have VMware Capacity Planner installed on the vCenter server. I checked my vCenter server and confirmed that it was indeed already installed.

Here's the fix:

It seems like the Consolidation Function for vCenter is disabled by default in the vpxd.cfg file. To enable the consolidation function, do the following:

On the vCenter server, edit c:\Documents and Settings\All Users\Application Data\VMware\VMware VirtualCenter\vpxd.cfg

(I'm still using VirtualCenter 2.5.0 Build 119598, so your path could be ...\vCenter\vpxd.cfg? I'm not sure!)

You will find the following three lines:

<vcp2v>

<dontStartConsolidation>true</dontStartConsolidation>

</vcp2v>

Change them to read:

<vcp2v>

<dontStartConsolidation>false</dontStartConsolidation>

</vcp2v>

Restart the VirtualCenter service using the Services.msc MMC snap-in in Windows. The Consolidation button should now be available in your VI Client, provided that the plugin is installed and enabled and that VMware Capacity Planner is installed on the vCenter server.
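
The same change can be sketched in Python 3 instead of editing the file by hand. This assumes vpxd.cfg is well-formed XML. The function name and the one-line sample config are hypothetical stand-ins of mine, and only the `<vcp2v>` and `<dontStartConsolidation>` element names come from the file contents shown above.

```python
import xml.etree.ElementTree as ET


def enable_consolidation(cfg_text):
    """Return cfg_text with every <dontStartConsolidation> element set to false."""
    root = ET.fromstring(cfg_text)
    flags = list(root.iter("dontStartConsolidation"))
    if not flags:
        raise ValueError("no <dontStartConsolidation> element found")
    for flag in flags:
        flag.text = "false"
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Stand-in sample, not a real vpxd.cfg.
    sample = (
        "<config><vcp2v>"
        "<dontStartConsolidation>true</dontStartConsolidation>"
        "</vcp2v></config>"
    )
    print(enable_consolidation(sample))
```

As with any scripted edit, keep a copy of the original file first, since ElementTree drops comments and original whitespace when it writes the XML back out.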

I’ve had a quick play with Veeam Monitor for VMware Infrastructure 3 Free Edition. Veeam Monitor looks like a good product and should come in handy if you have lots of things in your VI to monitor. It is better than the performance monitors in VMware vCenter, but then again it doesn’t take much to beat vCenter’s performance counters in detail.

I’ve installed Veeam Monitor on a Windows Server 2003 box. The installation wizard will give you an option to choose an existing SQL Database Server or to install SQL Express locally. I opted to install it on an existing SQL server. If you’re going to run Veeam Monitor in a production environment, I think it will make more sense to go with this option as well. The installation then went on and automatically created a new database on my SQL server called “Monitor”, so no need to create a database before kicking off the installation.

At first glance, Veeam Monitor looks and feels like a professional application. The GUI is clean and not cluttered with an overload of information. The graphs are easy to read and understand.

Veeam Monitor allows you to add a standalone ESX Server or a VirtualCenter or “vCenter” to monitor. In my case, I pointed it to my vCenter Server and as expected, it pulled the entire VI configuration from the VC, complete with Datacenters, Clusters, Hosts and Resource Pools.

Now, let’s look at the information it returns. For this article I’m only going to mention the information it returned for a single “Datacenter” defined in my VC. However, Veeam Monitor will report at a Datacenter, Cluster, Host, Resource Pool and Virtual Machine level. When looking at a Virtual Machine level, Veeam Monitor will allow you to access the VM’s console as well as the processes inside the VM’s Guest OS (however, the processes feature is not included in the Free Edition).

So at the datacenter level, it will return the following sets of information: Summary, Overall, CPU, Memory, Networking, Disk, Swap, Tops, Alarms and Events.

Here’s what they'll all tell you:

The Summary tab provides:

| Field | Description |
| --- | --- |
| Events Count | The number of events logged for the Datacenter |
| Performance data period | The time period that performance data are reported on |

The Overall tab provides graphs for:

CPU Usage, Memory Consumed, Network Usage, Disk Usage and Swap Usage.

The CPU, Memory, Networking, Disk and Swap tabs provide information on datacenter-wide utilization using 3D graphs (provided you selected 3D in the Veeam Monitor Client Options). This is much the same as what vCenter does; it just looks a lot better and is easier to read. As expected, you also have the option to select the counters that you would like to see for each graph.

Sorry that I’ve jumped past the CPU, Memory, Networking, Disk and Swap tabs without providing much detail, but it really is very similar to vCenter, at a datacenter level anyway. The Tops tab is the one I really liked. It displays the 3 Virtual Machines that are the top resource users for the last 5 minutes. They are shown as follows:

• Top CPU Users in Percentage

• Top Network Users in KBps

• Top Memory Users in MB

• Top Swap Users in MB

• Top Disk Users in KBps

The Alarms tab displays the alarms defined in VC for the selected VI object. It also shows a history of triggered alarms and gives you the option to create new alarms as well. Another function is to resolve a triggered alarm by adding a comment to the alarm. There’s also a button to clear triggered alarms when “Resolved, later than 1 day, later than 1 week, later than 1 month or all”.

Although I didn’t give an in-depth review of Veeam Monitor (because I just don’t have all the time in the world right now), I do think it’s a good product.

Just note that there are a few features that are not available in the Free Edition. The ones I came across are:

• No Alarm Modelling

• No reporting

• Only 24 hours of history displayed

• No access to the Guest OS Processes running inside the VM.

All in all, as with Veeam FastSCP, Veeam has got my thumbs up for Veeam Monitor!